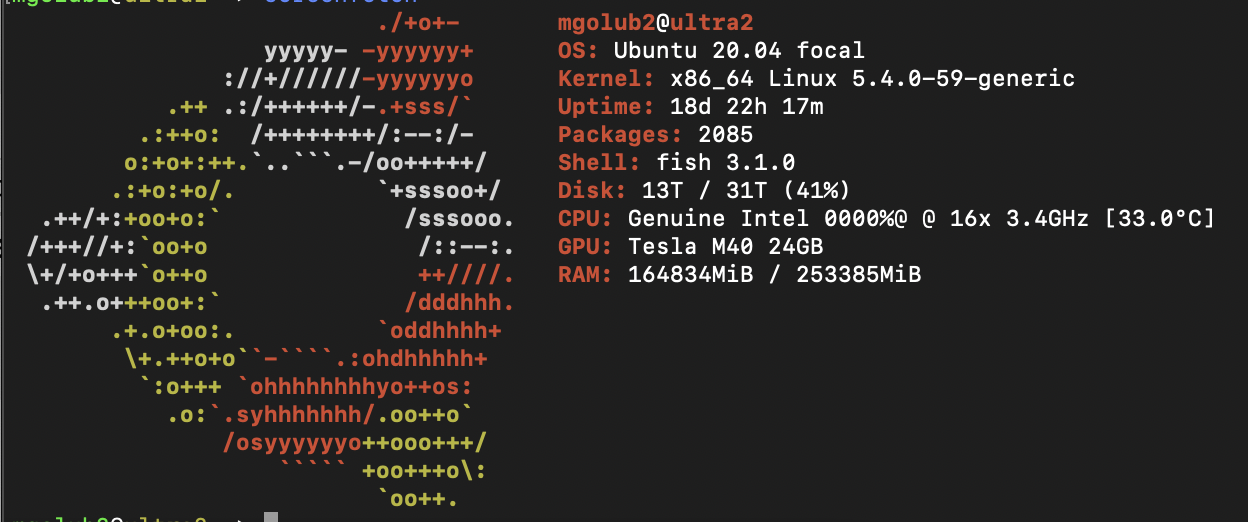

Datahoarding and ML Server Build

My home server is a little bit silly. It's certainly not as over the top as some builds on the DataHoarder subreddit, but I think it's a neat little build. It's evolved a lot over the years, and I've tried to document a bit of it here so other people can get ideas from it.

Hardware

As a side effect of a past job at Silicon Mechanics, I gained a not-so-minor affection for real server-grade hardware. No Core i7s or Ryzens here; instead we have the following hardware:

| Component | Part |
| --- | --- |
| Motherboard | Supermicro X11-SPM-F |
| CPU | Intel Xeon Gold 5217, 8 cores @ 2.8GHz |
| RAM | 4x 16GB Samsung 2133MHz ECC RDIMM |
| RAM | 2x 128GB Intel 2666MHz ECC DCPMM |
| GPU | Nvidia Tesla M40 24GB :D |
| HBA | LSI SAS3008 SAS3 |
| Boot SSD | Samsung SSD 950 PRO 512GB |
| Storage SSD | Fusion ioScale 3.20TB MLC SSD |
| HDD | 2x 12TB ZFS Mirrors, 1x 6TB ZFS Mirror |
| Case | Phanteks Eclipse P600S |

Overall it's a pretty decent system for everything I ask of it! I've had no issues other than a RAM stick going bad, which was quickly caught by ECC and noted in the IPMI interface of the X11 board. While Supermicro motherboards may be basic, they are rarely buggy in ways that are showstoppers. This is very different from my personal experience with the ASRock, EVGA, and Gigabyte boards I've used in various systems, which all had weird issues that would appear after running them for long periods, or just randomly. Also, I really like not having to plug in a monitor and keyboard to interface with the system, including for power/reset. These days there is at least Pi-KVM as a stand-in for a real IPMI.

GPU

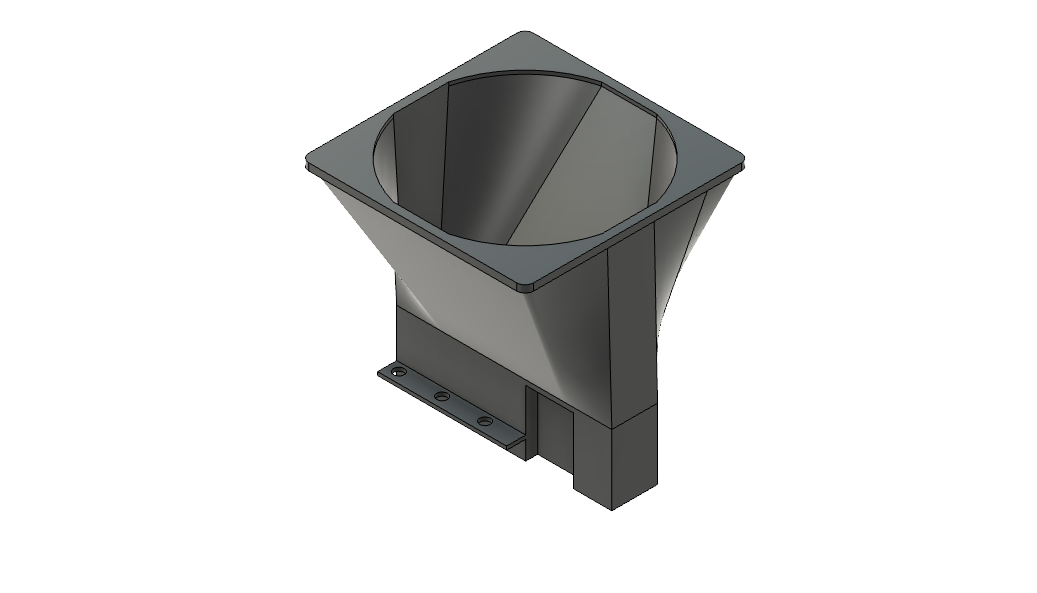

The M40 GPU took some work to cool properly, and in some training runs it still starts to throttle. The GPU came from eBay (as did most of the parts of this system) without a fan, and originally I printed out this model from Thingiverse. It actually worked really well, but was unbearably loud. I was unhappy with the other models I could find online, so I designed my own simple 3D-printed fan attachment funnel.

It's also not the fastest deep learning card out there by a long shot, but I wanted to do more research into transformer models, and it was by far the cheapest way to get 24GB of VRAM - I paid ~200 for mine! Performance-wise, it achieves about 4300 GFLOPS in the gpu-burn testing application I like using. In terms of deep learning performance, using the data from Lambda Labs to compare, the M40 is XX% slower running the transformerxlbase model.
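To put the gpu-burn figure in context, it can be compared against the card's theoretical FP32 peak. The sketch below is only a back-of-the-envelope check: the core count and boost clock are assumptions taken from the published Tesla M40 specifications rather than from this build, and the helper names are made up for illustration.

```python
# Rough sanity check: measured gpu-burn throughput vs. theoretical FP32 peak.
# ASSUMPTIONS (not from this build): 3072 CUDA cores and a 1114 MHz boost
# clock, as listed in the published Tesla M40 specs. Verify for your card.
M40_CUDA_CORES = 3072
M40_BOOST_MHZ = 1114.0
MEASURED_GFLOPS = 4300.0  # the gpu-burn figure quoted above


def peak_fp32_gflops(cuda_cores: int, clock_mhz: float) -> float:
    """Theoretical peak, counting one fused multiply-add (2 FLOPs) per core per cycle."""
    return 2 * cuda_cores * clock_mhz / 1000.0


def fraction_of_peak(measured_gflops: float, peak_gflops: float) -> float:
    """Share of the theoretical peak that a measured throughput reaches."""
    return measured_gflops / peak_gflops


if __name__ == "__main__":
    peak = peak_fp32_gflops(M40_CUDA_CORES, M40_BOOST_MHZ)
    share = fraction_of_peak(MEASURED_GFLOPS, peak)
    print(f"theoretical peak: {peak:.0f} GFLOPS, measured: {share:.0%} of peak")
```

Under those assumed specs the measured number lands at a bit under two thirds of the theoretical peak. A sustained burn test falling short of the boost-clock peak would be consistent with the thermal throttling described above, though other factors can contribute too.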

Memory

The memory setup on my system is a bit different from most. The memory on my server is split between 64GB of standard error-correcting registered memory, typical for servers, and 256GB of Optane persistent memory in two DIMM slots. Optane is a pretty cool technology - unlike current consumer SSDs, Optane works really well at low queue depths (queue depth being the number of outstanding operations). Normal SSDs, even ones that use single-level cell flash, have to queue many operations in parallel to achieve good performance, while Optane gets great performance (high throughput and low latency) with just one outstanding operation.
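The queue-depth point can be made concrete with Little's law, which ties throughput to concurrency and latency: IOPS is roughly the queue depth divided by the per-operation latency. The sketch below uses two made-up round latencies purely for illustration; they are not measurements of Optane or of any particular SSD, and the function names are hypothetical.

```python
# Little's law applied to storage: IOPS ~= queue_depth / latency.
# The latencies used below are ILLUSTRATIVE ASSUMPTIONS, not measured values.

def iops(queue_depth: int, latency_us: float) -> float:
    """Operations per second sustained with `queue_depth` requests in flight."""
    return queue_depth * 1_000_000 / latency_us


def queue_depth_needed(target_iops: float, latency_us: float) -> float:
    """In-flight requests required to reach `target_iops` at a given latency."""
    return target_iops * latency_us / 1_000_000


if __name__ == "__main__":
    low_latency_us = 10.0    # hypothetical low-latency device
    high_latency_us = 100.0  # hypothetical higher-latency device

    # With a single outstanding request, throughput is set by latency alone.
    print("QD1, low latency: ", iops(1, low_latency_us), "IOPS")
    print("QD1, high latency:", iops(1, high_latency_us), "IOPS")

    # The slower device needs many requests in flight to catch up.
    target = iops(1, low_latency_us)
    print("QD needed on slow device:", queue_depth_needed(target, high_latency_us))
```

This simple model ignores the device's internal parallelism limits, but it shows why a workload that issues one request at a time benefits far more from low latency than from high peak IOPS.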

What this enabled beyond really awesome SSDs is the Data Center Persistent Memory Module, or DCPMM. Intel developed the DCPMM, which puts Optane memory and a controller on the DDR4 bus, where it can be used either as bulk memory for the computer or in several direct access modes. Unfortunately, Optane seems to have been a failure with consumers, as Intel has canceled all future consumer-targeted Optane products. For me, though, the DCPMMs were a very inexpensive way to transparently get a lot of performance from ZFS by having lots of RAM.
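ZFS benefits from extra RAM through its in-memory read cache, the ARC. On Linux, OpenZFS limits the ARC size by default, so on a machine with a lot of memory it can be worth raising the cap with the `zfs_arc_max` module parameter, which takes a value in bytes. The post does not say whether this was tuned here, so the snippet below is just a sketch of how such a setting could be generated; the 192 GiB figure is a hypothetical example, not this server's configuration.

```python
# Build a modprobe options line that caps the ZFS ARC at a chosen size.
# `zfs_arc_max` is an OpenZFS module parameter expressed in bytes.
# The size used in the example is a HYPOTHETICAL value, not from this build.
GIB = 1024 ** 3


def arc_max_option(arc_gib: int) -> str:
    """Return an `options zfs ...` line setting the ARC cap to `arc_gib` GiB."""
    if arc_gib <= 0:
        raise ValueError("ARC size must be a positive number of GiB")
    return f"options zfs zfs_arc_max={arc_gib * GIB}"


if __name__ == "__main__":
    # Example: print a line suitable for a file such as /etc/modprobe.d/zfs.conf
    print(arc_max_option(192))
```

A line like this normally takes effect the next time the zfs module is loaded; leave enough headroom below total memory for everything else running on the machine.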

FusionIO SSD

All the other hardware is pretty standard and boring, though the bulk storage SSD is somewhat interesting - it's an old FusionIO SSD that uses an FPGA and a bunch of flash chips to implement a very fast SSD, even compared to the SSDs available now. For example, it gets up to 345K/385K IOPS R/W. It doesn't slow down much as it fills past 90%, unlike modern SSDs, and uses MLC, not TLC, which gives it much more well-rounded performance in tail-end scenarios. Note that these are not NVMe, and getting them working in Ubuntu 20.04 requires using this driver.

Software

Section Coming in the Future!

What Does it Do?

I use the server in many different ways:

Runs an NFS file server that provides bulk storage to my laptop and desktop

Manages my condo's wireless lights and switches via the excellent homebridge project